AI coding tools like GitHub Copilot, Cursor, and IDE-integrated assistants have transitioned from novelty to standard infrastructure. According to GitHub’s 2023 Octoverse Report, developers using AI assistants complete tasks 40–55% faster. For CTOs facing aggressive delivery cycles, the productivity gains are undeniable.

However, velocity without governance creates a “Quality Paradox.” Empirical research indicates LLM-assisted code contains approximately 1.7× more defects than human-written code, particularly in non-linear business logic and security-sensitive components. This gap translates directly into mounting technical debt, delayed releases, and SOC 2/HIPAA compliance exposure.

This article examines the critical failure modes of AI-generated code, quantifies their business impact, and outlines the Zero-Trust Governance Framework validated by InApps across enterprise engagements.

Why AI Code Fails Differently Than Human Code

LLM-generated defects are uniquely dangerous: they often produce “Syntactically Correct, Logically Flawed” output. Models prioritize statistical probability over architectural reasoning.

For CTOs, the risk is not that AI tools are unreliable. The risk is that their failure modes are systematically different from human failure modes, and most quality processes are calibrated for the latter.

Four Critical Failure Modes

-

Non-Local Logic Deviations (+75% in complex flows)

Coding assistants reason locally. They generate implementations correct within a narrow scope but fail when interacting with broader application state. Microsoft Research identifies this as the leading cause of production incidents in AI-assisted codebases – precisely because these defects pass test suites that were also model-generated.

InApps case: During a Node.js microservice refactor for a B2B SaaS platform processing roughly 50,000 API calls per day, a Copilot-generated async error handler silently swallowed promise rejections in a specific retry branch. The function succeeded in 99.3% of calls. The failure only appeared under a precise combination of network latency and database lock contention – conditions absent from staging. The defect ran live for six weeks before a customer escalation surfaced it.

-

Security Vulnerability Injection (+174% vs. reviewed code)

OWASP’s LLM Top 10 identifies a consistent tendency for models to reproduce insecure patterns from training data – hard-coded credentials, deprecated cryptography, insufficient input validation. Snyk’s independent analysis found AI-generated code introduces exploitable flaws at rates up to 2.74× higher than human-written code under standard peer review.

During a pre-launch security review for a US-based SaaS platform serving mid-market HR and payroll workflows – a compliance-sensitive environment subject to SOC 2 Type II requirements, engineers identified three instances of AI-generated JWT validation logic omitting algorithm verification – a known token forgery vector. The code passed all functional tests. It was caught only because the CI/CD pipeline included a Semgrep ruleset specifically targeting JWT misuse patterns.

-

Happy-Path Bias and Missing Edge Coverage

LLMs heavily weight common execution paths and systematically underweight error handling for null values, timeouts, constraint violations, and concurrent access. This bias is especially dangerous in financial and healthcare systems where edge cases represent regulatory-significant transactions.

InApps case: A fintech client processing over $4M in daily payment volume deployed an AI-assisted reconciliation module that handled all standard settlement flows correctly. Module handled all standard flows correctly but contained no handling for partial settlement responses – approximately 0.2% of transactions. Over four months, this produced silent ledger discrepancies. The fix took two days. The audit took three weeks.

-

Technical Debt via Code Bloat (up to 3× larger implementations)

Gartner projects that by 2026, 30% of new enterprise technical debt will stem from ungoverned AI output. AI-generated modules often show 20–40% higher cyclomatic complexity scores.

Teams scaling AI-assisted development benefit from quality gates embedded at the engagement level rather than dependent on individual developer discipline – enforced consistently across all modules regardless of who generated the code.

The Testing Trap

Teams using AI to generate both implementation code and test suites face a structural problem: LLMs treat their own output as ground truth, producing assertions that confirm expected behavior rather than challenge it – creating the illusion of green. Research across multiple engineering groups indicates over 30% of model-generated unit tests fail to compile due to incorrect imports or mismatched signatures.

InApps rule across all AI-assisted engagements: no AI-generated test suite ships without a human review pass focused specifically on boundary coverage and assertion logic.

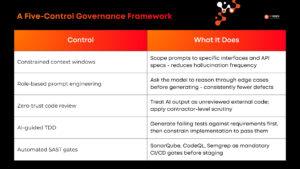

A Five-Control Governance Framework

“Based on patterns observed across 50+ enterprise engagements, InApps has codified five mandatory governance controls for AI-assisted development. Each control targets a specific failure mode identified in the sections above — from prompt governance through to automated security gates.

The InApps Five-Control Governance Framework

These five controls operate as a layered system — no single control is sufficient in isolation. Teams that implement all five consistently report defect escape rates returning to baseline human-code benchmarks within two release cycles.

Conclusion

AI coding tools represent a durable productivity advantage. The question is not whether to adopt them – it is whether your quality infrastructure is calibrated for their specific failure modes.

The teams capturing sustained value from AI-assisted development are those implementing structured verification at every layer: prompt design, code review, testing strategy, and automated security analysis. The failure modes in this article are not hypothetical. They are patterns InApps has observed, measured, and built controls around across fintech, healthtech, and SaaS environments.

Is your engineering team shipping fast but struggling with technical debt? Let’s build you a hybrid AI development model that scales without compromise.

Book a free architecture consultation

Frequently Asked Questions

- Why does AI-generated code increase the “Defect Escape Rate”?

It creates Semantic Confirmation Bias. LLMs often generate implementation and tests based on the same probabilistic logic, missing edge cases like race conditions or database locks. InApps research shows a 1.7× defect surge in distributed systems where AI fails to simulate runtime environmental latency.

- What is the real Total Cost of Ownership (TCO) of AI coding?

While coding speed rises 40–55%, McKinsey indicates maintenance costs can jump 25–40%. A hallucinated security flaw in production costs 100× more to fix than one caught during review. If refactoring cycles triple, your net productivity gain becomes negative

- How to balance “Velocity” and “Security” (SOC 2/HIPAA)?

Deploy Automated Guardrails. Integrate SAST/DAST (SonarQube, Snyk) directly into CI/CD. InApps enforces a “Human-in-the-loop” mandate for high-risk modules (Auth, Encryption, Persistence) to ensure speed doesn’t compromise compliance.

- Does AI accelerate Technical Debt?

Yes. AI prioritizes “immediate execution” over “architectural integrity,” leading to Cyclomatic Complexity and “Code Bloat.” Gartner predicts 30% of enterprise tech debt will be AI-driven by 2026. CTOs must shift from a “Coding-first” to a “Review-first” engineering culture.

Published: 31st March 2026 | Last reviewed: 31st March 2026 | By: InApps AI-Powered Marketing Team

Let’s create the next big thing together!

Coming together is a beginning. Keeping together is progress. Working together is success.