For an SME CTO in 2026, engineering velocity is no longer a competitive advantage – it’s a survival requirement. The integration of Generative AI into the software development lifecycle (SDLC) has promised a paradigm shift in productivity. However, as the initial hype settles, technical leaders are realizing that AI, when mismanaged, does not eliminate costs – it simply defers them, often compounding them into significant technical debt.

Why Does AI-Driven Velocity Often Lead to Higher TCO for SME Engineering Teams?

The allure of AI is immediate output, but the real Total Cost of Ownership (TCO) depends on whether that output fits the wider system. In early 2025, a Series B e-commerce SaaS company in the US/EU/AUS region shipped a complex feature in three weeks using an AI-first approach with its offshore team – a record delivery.

Six months later, the feature failed under production load. The root cause was architectural fragmentation: multiple AI-generated service contracts that didn’t align. Fixing it required 340 hours of senior engineering time – a figure drawn from InApps’ internal project postmortem data. This scenario highlights a critical truth: engineering teams spend roughly 70% of their time on maintenance and integration. AI code generation, without strong human oversight, is optimized for local correctness, not long-term systemic health.

What Are the Critical Risks of Ungoverned AI Code in Offshore Development Centers?

When you leverage AI inside an ODC without a defined governance boundary, three high-stakes failure modes surface:

Architectural Fragmentation and Erosion: AI models solve problems based on immediate context, lacking a holistic view of evolving SaaS architecture. Without senior orchestration, teams inherit codebases with duplicated logic and rigid data models.

Intellectual Property and Licensing Exposure: AI may reproduce code with copyleft obligations (GPL, AGPL). This is a material risk during Series C or M&A due diligence. The NIST AI Risk Management Framework identifies IP provenance and third-party dependency governance as primary risk factors in AI-assisted pipelines.

Security Debt via Stale Dependencies: AI models have training cut-offs and may suggest libraries with known CVEs. Static Application Security Testing (SAST), paired with dependency scanning for known-vulnerable versions, is needed to catch what automated AI pipelines miss.
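The licensing and dependency risks above can be expressed as a simple pre-merge audit. The following is a minimal sketch, not a real scanner: the `Dependency` type, the `audit` function, the package names, and the flagged versions are all hypothetical, and the license identifiers are illustrative. A production pipeline would draw this data from its own license and vulnerability tooling.

```python
from dataclasses import dataclass

# Hypothetical policy data for illustration only. A real pipeline would pull
# license metadata and advisories from its own scanners.
COPYLEFT_LICENSES = {"GPL-2.0", "GPL-3.0", "AGPL-3.0"}
# Package name -> versions flagged by a (hypothetical) advisory feed.
FLAGGED_VERSIONS = {"examplelib": {"1.0.0", "1.0.1"}}


@dataclass(frozen=True)
class Dependency:
    name: str
    version: str
    license: str


def audit(deps):
    """Return human-readable findings; an empty list means the set passes."""
    findings = []
    for dep in deps:
        if dep.license in COPYLEFT_LICENSES:
            findings.append(f"{dep.name}: copyleft license {dep.license}")
        if dep.version in FLAGGED_VERSIONS.get(dep.name, set()):
            findings.append(f"{dep.name}=={dep.version}: flagged version")
    return findings
```

Returning a list of findings rather than a boolean lets the reviewer see every problem in one pass instead of fixing them one failed build at a time.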

For insights into handling these risks, review our client case studies.

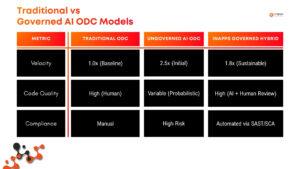

Comparison: Traditional vs. Governed AI ODC Models

How Does the “Architect-Orchestrator” Model Optimize ODC Performance?

The most effective ODC strategy for 2026 is the Governed Hybrid Model. In this framework, engineers function as Architect-Orchestrators who define boundaries and validate output rather than just writing syntax. For a baseline on these models, see what an ODC is and how it works.

This model relies on three non-negotiable controls:

- Architecture-First Sprints: Senior human architects define domain boundaries before any prompt is written.

- Mandatory Peer Review & SAST: Every AI-assisted commit passes through automated scanning and senior review.

- Minimum Viable Team (MVT): A cost-effective hybrid ODC requires at least one senior architect, two mid-level engineers, and one QA lead.
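The second control can be sketched as a merge gate. This is an illustration under stated assumptions, not InApps' actual tooling: the `Commit` type, the `may_merge` function, and the reviewer handles are hypothetical, and the sketch only encodes the rule that an AI-assisted commit needs both a passing SAST scan and a senior approval.

```python
from dataclasses import dataclass, field


@dataclass
class Commit:
    sha: str
    ai_assisted: bool
    sast_passed: bool
    approvers: set = field(default_factory=set)  # reviewer handles


def may_merge(commit, senior_reviewers):
    """Gate AI-assisted commits on a SAST pass plus at least one senior approval."""
    if not commit.ai_assisted:
        # The control described above covers AI-assisted commits only;
        # other commits follow whatever process the team already has.
        return True
    has_senior_approval = bool(commit.approvers & senior_reviewers)
    return commit.sast_passed and has_senior_approval


# Hypothetical reviewer roster for the example.
seniors = {"senior-architect"}
reviewed = Commit("b2", ai_assisted=True, sast_passed=True,
                  approvers={"senior-architect"})
unreviewed = Commit("a1", ai_assisted=True, sast_passed=True,
                    approvers={"mid-engineer"})
```

Here `may_merge(reviewed, seniors)` is allowed through, while `may_merge(unreviewed, seniors)` is blocked because no senior reviewer has signed off.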

Explore our team configurations on our about page or read about offshore team structures and tradeoffs.

What Is the Measurable ROI of a Governed AI-Powered ODC?

Based on InApps’ internal delivery data across ODC engagements in the US, EU, and Australian markets, this model delivers measurable ROI in three areas:

- Boilerplate Elimination: Offloading CRUD layers and unit test skeletons to AI recovers 12–15 hours of senior capacity per sprint, based on average velocity tracking across active InApps ODC engagements.

- Rapid Prototyping: Drastically reduces the “cost of being wrong” by validating product directions faster.

- Accelerated Junior Developer Output: Under structured mentorship, junior developers have increased commit rates by 43% – measured via Git analytics across 12 InApps-managed ODC teams over a 6-month period.
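To put the recovered-capacity figure in perspective, it can be set against the 340-hour remediation cited earlier in this article. The two numbers come from different contexts, so the comparison below is illustrative arithmetic only; the function name `sprints_to_offset` is a label chosen for this sketch.

```python
import math

REMEDIATION_HOURS = 340            # senior hours from the postmortem cited earlier
RECOVERED_PER_SPRINT = (12, 15)    # senior hours recovered per sprint (low, high)


def sprints_to_offset(remediation_hours, recovered_per_sprint):
    """Whole sprints of recovered capacity needed to cover a remediation cost."""
    return math.ceil(remediation_hours / recovered_per_sprint)


low, high = RECOVERED_PER_SPRINT
slowest = sprints_to_offset(REMEDIATION_HOURS, low)    # 340 / 12, rounded up
fastest = sprints_to_offset(REMEDIATION_HOURS, high)   # 340 / 15, rounded up
print(f"{fastest} to {slowest} sprints of recovered capacity")
```

On these figures, one ungoverned failure of that size would consume 23 to 29 sprints of recovered senior capacity, which is why the gains only hold when the governance controls are in place.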

To understand the staffing behind these results, see our offshore product development services.

Is AI-Generated Code Compliant with SOC 2, HIPAA, and ISO 27001?

Yes, provided the controls around it hold. Compliance frameworks do not distinguish between AI-generated and human-written code – they evaluate the operating controls around it. Governance is the variable. By maintaining human review and SAST pipelines, AI-assisted development can meet the same standards applied to human-written code in fintech and healthcare.

How Can CTOs Prevent AI Hallucinations from Reaching Production?

Preventing hallucinations requires three specific integration boundaries:

- Architecture Conformance Testing: Against documented domain contracts.

- Integrated SAST Pipeline Analysis: To catch logic and security flaws.

- Mandatory Senior Sign-off: Human review at the integration boundary is non-negotiable. AI tools cannot validate their own context.
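The first boundary, architecture conformance testing, can be sketched as a check of a service payload against a documented contract. The contract below, its field names, and the `contract_violations` function are hypothetical examples. Real domain contracts are usually richer (nested types, versioning, optional fields), so treat this as a minimal illustration of the idea.

```python
# Hypothetical documented contract for an "order" payload: field name -> type.
ORDER_CONTRACT = {"order_id": str, "amount_cents": int, "currency": str}


def contract_violations(payload, contract):
    """List every way a payload departs from the documented contract."""
    violations = []
    # Every documented field must be present with the documented type.
    for name, expected in contract.items():
        if name not in payload:
            violations.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected):
            actual = type(payload[name]).__name__
            violations.append(f"{name}: expected {expected.__name__}, got {actual}")
    # Fields the contract does not document are flagged as well.
    for name in payload:
        if name not in contract:
            violations.append(f"undocumented field: {name}")
    return violations


# A payload of the kind a locally-correct but misaligned service might emit.
drifted = {"order_id": "A1", "amount_cents": "1999", "total": 5}
problems = contract_violations(drifted, ORDER_CONTRACT)
```

Running a check like this in CI turns a contract mismatch into a failed build at the integration boundary, where the senior reviewer can see it before sign-off.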

Conclusion: The fastest path to production is the one with the fewest surprises. For an SME CTO, pioneering the future means building the governance that makes AI-driven velocity sustainable.

Frequently Asked Questions

Is AI-generated code safe in regulated industries like fintech or healthcare?

Yes – when it passes mandatory human review, SAST analysis, and architecture conformance testing before deployment. SOC 2 Type II, HIPAA, and ISO 27001 frameworks evaluate the controls around the code, not who or what wrote it.

What’s the minimum team size for a hybrid AI ODC to be cost-effective?

Four: one senior architect, two mid-level engineers, one QA lead. At this configuration, AI tooling functions as a fifth contributor on volume tasks while the human team owns architecture and compliance. See how InApps staffs these teams on our ODC services page.

What does a single ungoverned AI commit actually cost an SME engineering team?

More than most CTOs budget for. Based on InApps’ internal postmortem data, a single architectural misalignment from an ungoverned AI commit required 340 hours of senior engineering time to remediate – exceeding the entire sprint budget it was meant to accelerate. Beyond hours, the costs compound: delayed releases, emergency QA cycles, and compliance re-audits. For a Series B or C company on an 18-month runway, one unreviewed AI commit in a critical service boundary is not a code quality issue – it is a business continuity risk.

To discuss how InApps structures hybrid ODC engagements for regulated-industry clients: inapps.net/offshore-development-center

Published: March 25, 2026 | Last reviewed: March 25, 2026 | By: InApps AI-Powered Engineering Strategy Team
