A Lightweight Service Mesh for Kubernetes

Amid all the talk about service mesh this week at KubeCon + CloudNativeCon 2017, the company behind the Linkerd project unveiled a version specifically for Kubernetes called Conduit.

The open source Linkerd creates a separate communication layer that transparently handles service discovery, load balancing, failure handling, instrumentation, and routing for cloud-native applications. It is a Cloud Native Computing Foundation project, and Kubernetes is that foundation’s orchestrator of choice.

Built on components like Finagle, Netty, Scala, and the JVM, Linkerd was great at scaling up, but not at scaling down for environments with limited resources, such as sidecar-based Kubernetes deployments, explained William Morgan, CEO of sponsor company Buoyant.

When Linkerd was released in 2015, the orchestration market was fragmented, with a lot of people on Nomad, Mesos, Kubernetes and others who hadn’t decided what to do.

“With Linkerd, we were very explicit about making as many integrations as possible,” he explained. Fast forward two years and Kubernetes is dominating the orchestration landscape. While Linkerd has many customers using the alternatives, Kubernetes is the fastest-growing audience and the company wanted to specifically address it.

He said the company built Conduit by listening to customers.

“Now that people have learned about service mesh and the value it could provide, what they wanted was the benefits of Linkerd, but in a package that was extremely small and lightweight. They wanted security primitives in there from the ground up,” he said.

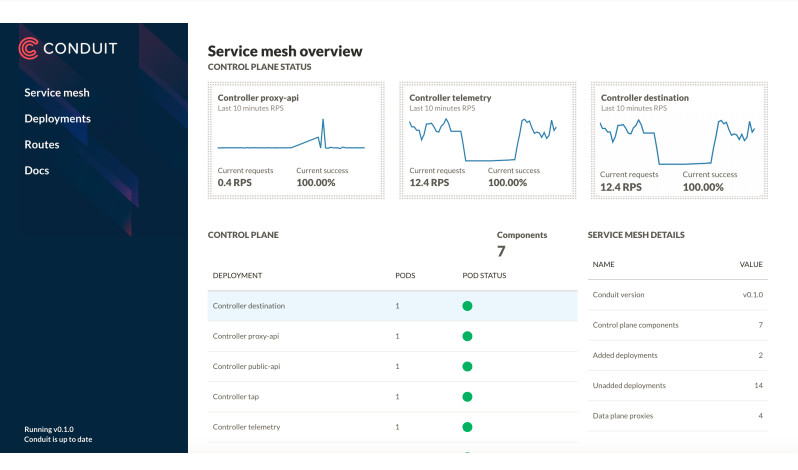

The Conduit Kubernetes service mesh is deployed on a Kubernetes cluster as a data plane made up of lightweight proxies, which are deployed as sidecar containers alongside your service code, and a control plane of processes that coordinate and manage these proxies.

A single Conduit proxy has sub-millisecond p99 latency and runs in less than 10 MB of resident set size (RSS) memory.

The data plane carries application request traffic between service instances. The proxies transparently intercept communication to and from each pod, and add features such as retries and timeouts, instrumentation, and encryption (TLS), as well as allowing and denying requests according to the relevant policy.
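To make the proxy's role concrete, here is a minimal Python sketch of a sidecar-style wrapper that checks policy, applies a timeout, retries, and counts attempts. It is not Conduit's code (the real data plane is written in Rust); the names `SidecarProxy`, `send`, `allowed_routes` and `RequestDenied` are hypothetical, and the transport function is assumed to raise `TimeoutError` or `ConnectionError` on failure.

```python
from dataclasses import dataclass
from typing import Callable, Set


class RequestDenied(Exception):
    """Raised when the (hypothetical) policy does not allow a route."""


@dataclass
class SidecarProxy:
    # Hypothetical transport: takes (route, timeout_s) and returns a response.
    send: Callable[[str, float], str]
    allowed_routes: Set[str]
    max_retries: int = 2
    timeout_s: float = 0.5
    attempts: int = 0  # simple instrumentation counter

    def call(self, route: str) -> str:
        # Policy check: deny before any traffic leaves the pod.
        if route not in self.allowed_routes:
            raise RequestDenied(route)
        last_error = None
        # One initial attempt plus up to max_retries retries.
        for _ in range(self.max_retries + 1):
            self.attempts += 1
            try:
                return self.send(route, self.timeout_s)
            except (TimeoutError, ConnectionError) as exc:
                last_error = exc
        raise last_error
```

Because the wrapper sits between the caller and the transport, the service code itself needs no retry or policy logic, which is the point of the sidecar approach described above.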

The control plane is also a source of aggregated metrics.
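As an illustration of what aggregation could look like, the sketch below merges per-proxy reports into per-service success rates and p99 latency. The report format (`service`, `requests`, `failures`, `latencies_ms`) is an assumption made for this example, not Conduit's actual telemetry schema, and the percentile uses the simple nearest-rank method.

```python
from collections import defaultdict


def p99(samples):
    """Nearest-rank 99th percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("p99 needs at least one sample")
    ordered = sorted(samples)
    # ceil(0.99 * n) computed with integers to avoid float rounding.
    rank = (99 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def aggregate(reports):
    """Merge per-proxy reports (hypothetical format) into per-service stats."""
    merged = defaultdict(
        lambda: {"requests": 0, "failures": 0, "latencies_ms": []}
    )
    for report in reports:
        stats = merged[report["service"]]
        stats["requests"] += report["requests"]
        stats["failures"] += report["failures"]
        stats["latencies_ms"].extend(report["latencies_ms"])
    return {
        name: {
            "success_rate": 1 - stats["failures"] / stats["requests"],
            "p99_ms": p99(stats["latencies_ms"]),
        }
        for name, stats in merged.items()
        if stats["requests"] > 0  # skip services with no traffic
    }
```

Merging raw samples keeps the example short; a real system would more likely ship histograms, since raw latencies grow without bound.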

The data plane is written in Rust and the control plane in Go.

“Rust gives us the ability to write native code, so it’s about as fast as you can write software to be, but it also guarantees memory safety. That means we sidestep a bunch of issues around buffer overflow, CVEs and cloud leaks — they’re not even an issue for the data plane. That was really important to us because the data plane is running deep inside your stack. It’s exposed to PII (personally identifiable information), HIPAA data, credit-card information,” Morgan said.

Go was a natural choice for the control plane because it is so common in the Kubernetes community and is an easy language to get started with, which keeps the barrier to community contribution low.

The control plane API is designed to be generic enough to allow other tools to be built on top. The initial release does not yet support custom functionality, but the plan is for gRPC plugins that run as part of the control plane, with no need to recompile Conduit.

Users interact with the service mesh through a command-line interface (CLI) or a web app, either of which can be used to control the cluster.

Kubernetes provides a natural way to use the sidecar approach, which is necessary to enforce some types of advanced security policy, Morgan said.

“To be a sidecar, you want to be as tiny as possible. You’re not really handling that much traffic, just the traffic for an individual instance, but it has to do it reliably and be really small.”

The announcement covered Version 0.1. “It’s so alpha, it only supports HTTP2. It doesn’t even support HTTP1,” he said.

He outlined a set of features that have to be there before it will be ready for production, including:

- Reliability semantics, such as retries, timeouts and circuit breaking.

- Request routing: programmatic control over how traffic flows.

- Security policy — “We have the primitives in place, but just haven’t done it yet,” he said.
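Of the reliability semantics listed above, circuit breaking is the least self-explanatory, so a minimal sketch may help. This is a generic textbook pattern, not Conduit's design: after a number of consecutive failures the breaker opens and fails fast, then lets a single trial request through once a cooldown has passed. The class name, thresholds and injectable `clock` are all assumptions made for illustration.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is rejected."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock        # injectable so tests can fake time
        self.failures = 0         # consecutive failures seen so far
        self.opened_at = None     # None means the circuit is closed

    def call(self, fn, *args):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("failing fast")
            # Cooldown elapsed: half-open, allow one trial request.
        try:
            result = fn(*args)
        except Exception:
            self.failures += 1
            # A failed trial, or too many failures, (re)opens the circuit.
            if (self.opened_at is not None
                    or self.failures >= self.failure_threshold):
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Failing fast protects an unhealthy service from further load, while retries and timeouts protect the caller. The two are usually combined.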

Its roadmap includes proxy transparency, the ability to handle all communication between services, not just HTTP; inter-service authentication and secrecy by default; extensions to the Kubernetes API to support a variety of real-world operational policies; and support for environment-specific policy plugins.

The build-out of Conduit will have little effect on the development, support and maintenance of Linkerd, Morgan said. Linkerd is focused on integration with as many environments as possible and on the ability to span environments.

Cloud Native Computing Foundation and Buoyant are sponsors of InApps.

Feature image via Pixabay.

Source: InApps.net
